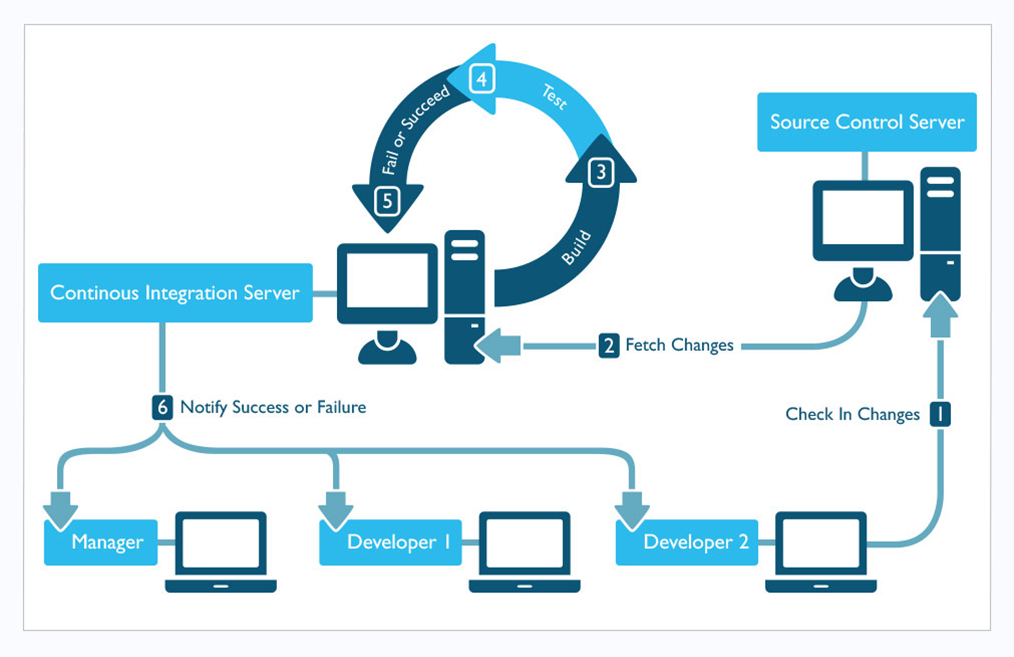

Continuous Integration (CI) is the practice of applying quality control continuously: small pieces of effort, applied frequently, within an Agile Model. It aims to improve the quality of software, and to reduce the time taken to deliver it, by replacing the traditional practice of applying quality control only after all development is complete.

Continuous Delivery (CD) is the result of successful Continuous Integration: the software is kept in a state in which an updated version can be released to production at any time.

Software development methodologies

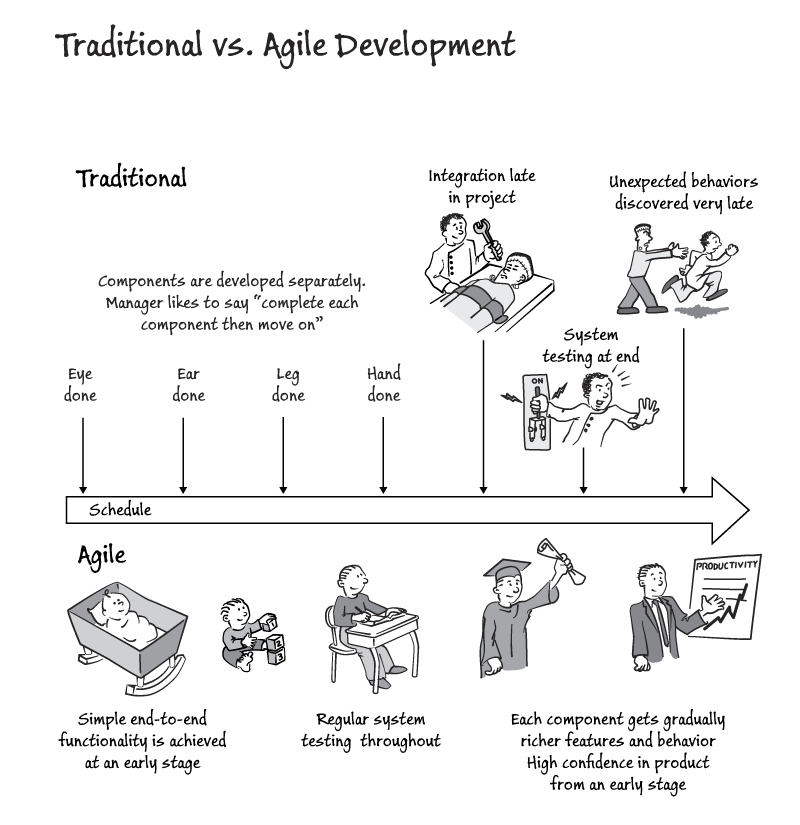

There are many different models for managing development projects, each with advantages and disadvantages. They fall into two major categories:

- Sequential development, in which a development team proceeds through a set of phases in order. In general, the team does not move to a new phase until the preceding phase is complete.

- Iterative development, in which a team cycles through a set of phases for a portion of the project, then moves on to another portion and repeats; this continues—often at a rapid pace—until the entire project is completed.

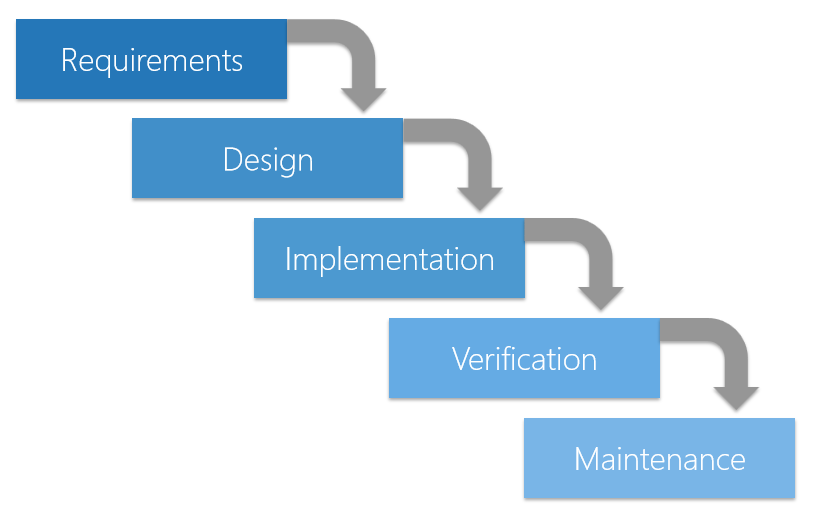

The past: The Waterfall Model

An example of sequential development is the Waterfall Model, so named because it flows through a set of phases like water cascading down a waterfall. Characteristics include:

- The team starts with a detailed (and perhaps lengthy) design process, identifying the requirements of a program and designing a system to meet those requirements.

- After the design is complete, the team begins implementing code, testing each module as it goes.

- Next, the team integrates the individual modules and tests the overall system.

- Finally, the project is deployed and moved into a maintenance phase.

The Waterfall approach favours spending a lot of time on design and planning; coding often does not begin until all of the design details are resolved.

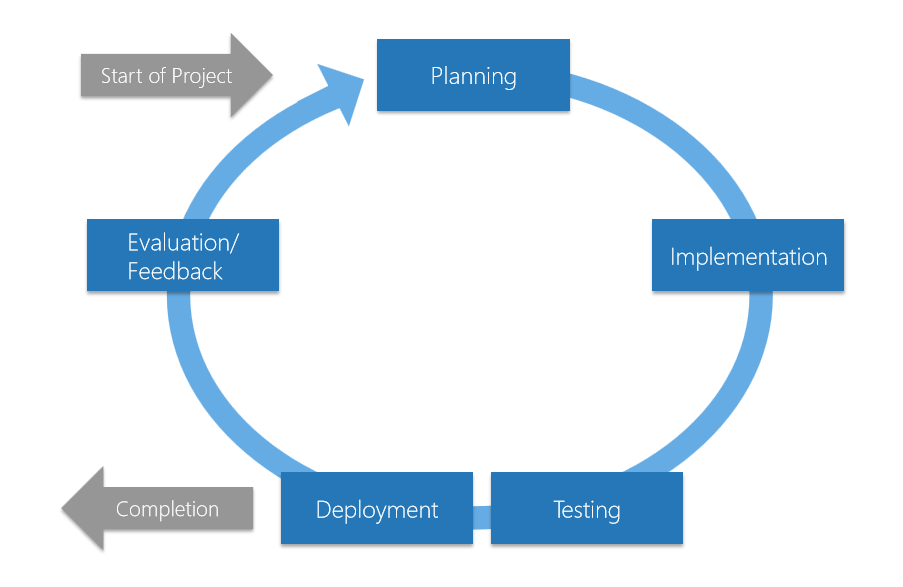

The future: The Agile Model

In Agile development, software is developed in incremental, rapid cycles. This results in small, incremental releases, with each release building on previous functionality.

Agile software development can follow various Agile frameworks that differ in complexity and required practices. The most widely used Agile framework is Scrum.

Each release is thoroughly tested, so that issues are found early and can be addressed in the next iteration.

Agile emphasizes the ability to respond to change, rather than extensive planning. While planning is still important, the plan is subject to change as the project develops.

Projects are delivered “early and often”, giving customers/users the opportunity to provide feedback that can influence the next iteration.

Agile is about committing to an achievable amount of work, in an achievable amount of time, making the most of the resources and manpower available, and therefore achieving a continuous flow of value. And then it’s about taking a step back, assessing what’s been achieved well, and what could have been done better, and doing it again and doing it better.

Continuous Integration and Continuous Delivery

Continuous Integration and Continuous Delivery are the logical consequence of applying Agile consistently and permanently.

- Automated tests are an important part of any Continuous Integration (CI) pipeline.

- Without automated tests there can be no successful CI pipeline.

- From a technical point of view, automated tests are implemented as Unit Tests and executed automatically in every build.

- Automated (behaviour-driven) Acceptance Tests complement the traditional Unit Tests written by the developers. Developers should focus their Unit Tests on covering the program code, while test developers should focus their tests on the functionality, including GUI tests.

- Automated test developers need to be “specialised developers” who are part of the development team. There should be no separation of development teams and test teams.
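
The split between developer-written Unit Tests and behaviour-driven Acceptance Tests can be sketched as follows. This is only an illustration using Python's standard `unittest` module; the `apply_discount` function, the `Basket` class, and the `SAVE10` voucher code are hypothetical names invented for the example and do not come from any real project.

```python
import unittest


def apply_discount(total, code):
    """Hypothetical business rule: the code 'SAVE10' takes 10% off."""
    if code == "SAVE10":
        return round(total * 0.9, 2)
    return total


class Basket:
    """Hypothetical application object exercised by the acceptance test."""

    def __init__(self):
        self.items = []
        self.code = ""

    def add(self, price):
        self.items.append(price)

    def checkout(self):
        return apply_discount(sum(self.items), self.code)


class ApplyDiscountUnitTest(unittest.TestCase):
    """Developer-written Unit Tests: cover each branch of the code."""

    def test_known_code_reduces_total(self):
        self.assertEqual(apply_discount(100.0, "SAVE10"), 90.0)

    def test_unknown_code_leaves_total_unchanged(self):
        self.assertEqual(apply_discount(100.0, "BOGUS"), 100.0)


class CheckoutAcceptanceTest(unittest.TestCase):
    """Behaviour-driven Acceptance Test: phrased as Given / When / Then."""

    def test_customer_with_voucher_pays_reduced_price(self):
        # Given a basket with two items and a valid voucher
        basket = Basket()
        basket.add(40.0)
        basket.add(60.0)
        basket.code = "SAVE10"
        # When the customer checks out
        total = basket.checkout()
        # Then the total reflects the discount
        self.assertEqual(total, 90.0)


def run_all_tests():
    """Run both suites as a build step would; True means the build may proceed."""
    loader = unittest.TestLoader()
    suite = unittest.TestSuite()
    suite.addTests(loader.loadTestsFromTestCase(ApplyDiscountUnitTest))
    suite.addTests(loader.loadTestsFromTestCase(CheckoutAcceptanceTest))
    result = unittest.TextTestRunner(verbosity=0).run(suite)
    return result.wasSuccessful()
```

Both suites run through the same runner, so the build can fail on either kind of test without a separate test phase.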

Builds and deployments

The process for builds and deployments using a distributed version control system like Git in Continuous Integration / Continuous Delivery could be:

- The developer creates a new (temporary) branch from the master branch and switches to it (checkout). This branch should be used for one change only, with the intent of keeping it for no longer than a few hours.

- The developer implements the change in the new branch.

- The developer runs a build process in the Development environment(s) with execution of the Unit Tests and the integration and automated Acceptance Tests (from Behaviour-Driven Development – BDD) that can run in the development environment(s). There can be one or multiple Development environments, depending on the requirements and complexity of the project.

- The developer commits the change (to save it in the new branch locally).

- The developer publishes the new branch (to upload it to the central repository).

- The developer sends a pull request to a senior (lead) developer.

- The senior (lead) developer does a (code) review of the change.

- If the senior (lead) developer is satisfied with the change, they merge the new branch into the master branch and trigger a build and deployment process into the pre-production or production environment (for Continuous Delivery).
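
The developer's part of the steps above maps onto a short sequence of Git commands. The sketch below only builds that sequence and, by default, prints it rather than running it. The remote name `origin` and the helper names `feature_branch_commands` and `run` are assumptions made for illustration. Creating the pull request is not shown, because that step depends on the hosting platform rather than on Git itself.

```python
import shlex
import subprocess


def feature_branch_commands(branch, message, base="master", remote="origin"):
    """Return the Git commands for one short-lived change, in order."""
    return [
        ["git", "checkout", base],          # start from the master branch
        ["git", "checkout", "-b", branch],  # create and switch to the temporary branch
        # ... implement the change, then run the build and the tests ...
        ["git", "add", "--all"],            # stage the change
        ["git", "commit", "-m", message],   # save it in the local branch
        ["git", "push", "--set-upstream", remote, branch],  # publish the branch
    ]


def run(commands, dry_run=True):
    """Print the commands, or execute them and stop at the first failure."""
    for command in commands:
        if dry_run:
            print(shlex.join(command))
        else:
            subprocess.run(command, check=True)


run(feature_branch_commands("fix-login-typo", "Fix typo on login page"))
```

Passing `dry_run=False` inside a real working copy would execute the commands, with `check=True` aborting the sequence as soon as one of them fails.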

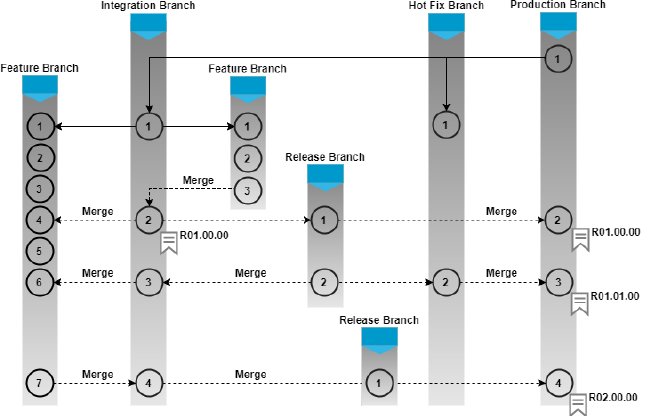

However, although the basics of this process will remain the same, most environments require a more sophisticated branching strategy.

The deployment environment for the last step depends on the maturity of the Quality Assurance (QA) process (such as the coverage achieved by the automated tests), on the complexity of the project, and on the infrastructure and system dependencies. In an ideal world the deployment would be done only once, but in practice multiple successive deployments (transports) to several environments may be required (such as System Integration or QA Testing, User Acceptance Testing, and Production), each with its own quality gates for moving from one environment to the next. In any case, every build and deployment process should be automated as much as possible to speed up the overall delivery process.
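
The idea of successive environments, each with its own quality gate, can be sketched as below. The `Environment` class, the `promote` function, and the gate functions are hypothetical. A real gate would check test results, coverage, or a manual sign-off instead of returning a fixed value.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Environment:
    name: str
    gate: Callable[[str], bool]  # True if the version may move on to the next environment


def promote(version: str, environments: List[Environment]) -> List[str]:
    """Deploy to each environment in order, stopping at the first failed gate."""
    reached = []
    for env in environments:
        reached.append(env.name)  # stands in for the real automated deployment
        if not env.gate(version):
            break                 # the version goes no further
    return reached


pipeline = [
    Environment("System Integration", lambda version: True),
    Environment("User Acceptance Testing", lambda version: False),
    Environment("Production", lambda version: True),
]
print(promote("1.4.2", pipeline))  # ['System Integration', 'User Acceptance Testing']
```

In this run the version never reaches Production, because the User Acceptance Testing gate rejects it.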

It can be a good idea to deploy every new version to a separate, new pre-production environment. This is particularly useful if the pre-production environments can be built on the fly during the build and deployment process, for example by spinning up new virtual machines (in the cloud) or Docker containers for every new version.

Automated deployments

Successful Continuous Integration requires a version-controlled, automated build and deployment process to eliminate the problems of manual deployments:

Lack of consistency: Most enterprise-level applications are developed by a team. Team members are likely to use different operating systems, or otherwise configure their machines differently from one another. The environment on each team member’s local machine will therefore differ from the others and, by extension, from the production servers. So even if all tests pass locally on a team member’s machine, there is no guarantee that they will pass in production.

Lack of independence: When several services depend on a shared library, they must all use the same version of that library, so none of them can upgrade it independently of the others.

Time-consuming and error-prone: Without automated deployments, every time we want a new environment (staging/production), or the same environment in multiple locations, we need to deploy it manually and repeat the same steps to configure users and firewalls and to install the necessary packages. This produces two problems:

- Time-consuming: Manual setup can take anything from minutes to hours.

- Error-prone: Humans are prone to errors. Even if we have carried out the same steps hundreds of times, a few mistakes will creep in somewhere.

These issues scale with the complexity of the application and the deployment process. They may be manageable for small applications, but not for larger applications composed of dozens of micro-services.

Risky deployment: Because the job of server configuration, updating, building, and running an application can only happen at deployment time, there is more risk of things going wrong when deploying.

Difficult to maintain: Managing a server/environment does not stop after the application has been deployed. There will be software updates, and the application itself will be updated. When that happens, someone has to log in to each server manually and apply the updates, which is, again, time-consuming and error-prone.

Downtime: Deploying an application on a single server means that there is a single point of failure (SPOF). If we need to update the application and restart it, the application will be unavailable during that time. Applications deployed this way therefore cannot guarantee high availability or reliability. It is much easier and safer to deploy multiple servers automatically.
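
To make the downtime argument concrete, the sketch below updates servers one at a time and records how many servers remain available during each step. It is a toy model under simple assumptions: the server names and the `rolling_update` function are invented for illustration, and the "deployment" is just a dictionary assignment.

```python
def rolling_update(fleet, new_version):
    """Update one server at a time; return how many servers kept serving at each step."""
    serving_during_step = []
    for server in list(fleet):
        # Only the server being updated is out of rotation during this step.
        serving_during_step.append(len(fleet) - 1)
        fleet[server] = new_version  # stands in for the real automated deployment
    return serving_during_step


single = {"app-1": "1.0"}
cluster = {"app-1": "1.0", "app-2": "1.0", "app-3": "1.0"}
print(rolling_update(single, "1.1"))   # [0]: nothing serves while the only server restarts
print(rolling_update(cluster, "1.1"))  # [2, 2, 2]: two servers always keep serving
```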

Lack of version control: With application code, if a defect is introduced, slips through the tests, and gets deployed to production, we can simply roll back to the last known-good version. The same principle should apply to our environment as well.

If we change the server configuration or upgrade a dependency and this breaks the application, there is no quick and easy way to revert the change. The worst case is when we indiscriminately upgrade multiple packages without first noting down the previous versions: then we won’t even know what to revert to!
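
One way to picture version control for an environment is to record the installed package versions before every change, so that a revert plan can be computed afterwards. The following is a minimal sketch, not a replacement for a real configuration management tool. The package names and versions are made up, and `snapshot_to_json` and `plan_revert` are hypothetical helpers.

```python
import json
from typing import Dict, Optional


def snapshot_to_json(packages: Dict[str, str]) -> str:
    """Serialise a package snapshot with sorted keys, so that diffs in version control stay small."""
    return json.dumps(packages, indent=2, sort_keys=True)


def plan_revert(before: Dict[str, str], after: Dict[str, str]) -> Dict[str, Optional[str]]:
    """For every package that changed, return the version to restore (None means remove it)."""
    plan = {}
    for name in before.keys() | after.keys():
        if before.get(name) != after.get(name):
            plan[name] = before.get(name)
    return plan


before = {"openssl": "1.1.1", "nginx": "1.18.0"}
after = {"openssl": "3.0.0", "nginx": "1.18.0", "libexample": "2.0"}
print(plan_revert(before, after))
```

Here the plan restores `openssl` to `1.1.1` and marks `libexample` for removal, while `nginx` is left alone because its version did not change.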

Opinion: Waterfall Development versus Agile Development

Software development life-cycles are getting shorter, and new features need to be introduced faster than ever before. Customers have become more demanding, and business has become much faster and more competitive. This leaves no time for a traditional Waterfall Model that spends months on Requirements, Planning, Design, Programming, Testing and Release in a single chain. Big releases carry huge risks, because the end product might no longer meet the changed requirements of the business or its customers by the time it finally goes into production.

Software development now needs to happen permanently (“continuously”), in fast cycles, delivering value sooner with high-quality results. This is the core of Agile software development. Delivering faster and releasing software more often also greatly reduces the risk of delivering unsuitable or buggy software, and allows for faster adaptation to change and constant re-evaluation. Because change increments are smaller, failures surface sooner and are less severe, which allows faster learning and adaptation. Culturally, failures should be seen as “purchased information” and therefore as improvement opportunities.

There is also an increased need for much closer, permanent collaboration between Business, Developers, Testers, and Operational staff: they should all be at the same table right from the start of any project and talk directly to each other. This is what is now understood as the “DevOps” world, where Development and Operational staff collaborate and share information as one team. Operational failures must result in new automated tests, so that the whole software development life-cycle improves.

In this new world of software development, it is imperative that automated software testing is tightly integrated into the software build/release process. It must not be done after the build as a separate last step, as in the traditional approach where developers threw the software “over the wall” to the testers, and the testers then threw it (via the release process) “over the wall” to the operational staff. There should be no more separation between developers and (automated) testers. Ideally (in DevOps), there should also be no wall between the development/test and the operational teams.

Automated software testing must now be built directly into the software build process, and automated test developers must be part of the development team. Developers should write Unit Tests to cover their code (white box testing), and automated testers should write tests to cover the business functionality (black box testing). Not integrating automated testing into the build/release process would mean sticking to the Waterfall Model of software development. Test automation on a basically finished release hardly ever produces a positive return on investment.

Software testing needs to change: as a long-term target, all possible tests should be automated. This replaces the traditional thinking that the majority of testing should be manual.

As a good example, Google currently runs over 100 million (!) automated tests against their software per day. Automated tests give them the ability and the confidence to innovate, improve and release software continuously. Eran Messeri from Google summarised the true value of automated tests perfectly: “Automated tests transform fear into boredom”.